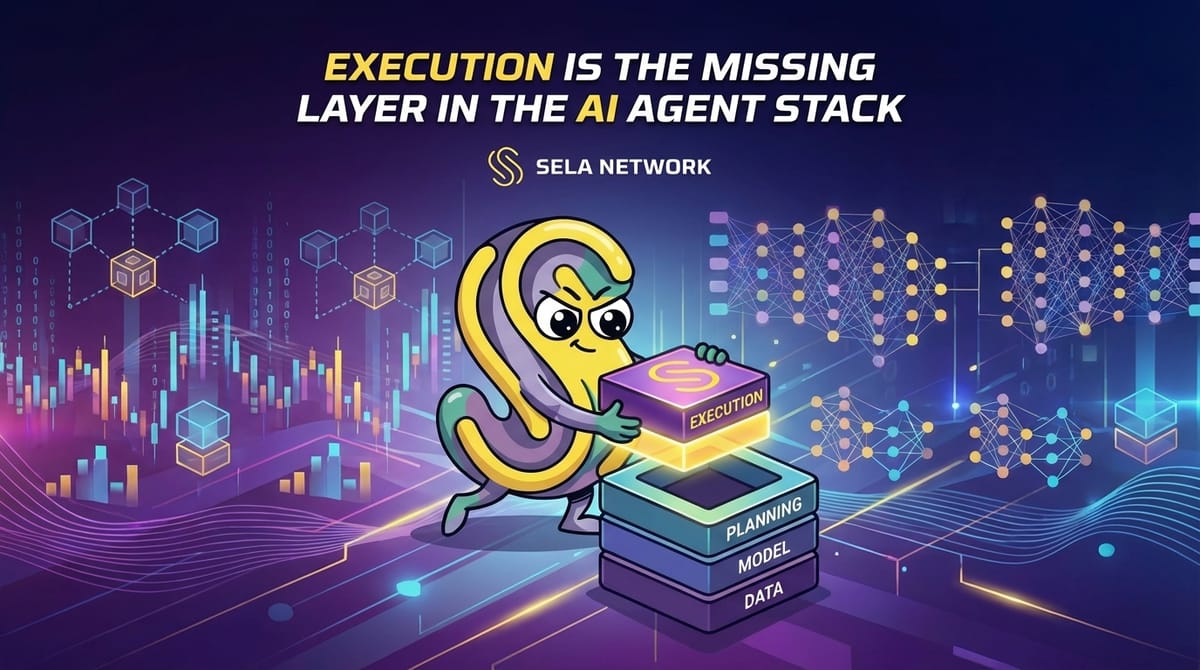

Execution Is the Missing Layer in the AI Agent Stack

Anyone who has tried to connect an AI agent to the real web has probably felt this gap.

The model is capable.

The reasoning makes sense.

In isolation, everything works.

But once the agent is wired into a live website, things start to unravel.

Flows that worked yesterday suddenly fail.

A small UI change breaks the entire process.

A login hangs.

Selectors stop matching.

Logs exist, but they stop explaining what actually went wrong.

At that point, most teams ask the same questions.

Is the model still not good enough?

Do we need better prompting?

More guardrails? More retries?

In practice, the issue is rarely the model.

The problem is execution.

Agents Can Decide — They Struggle to Act

For modern AI agents, thinking is no longer the bottleneck.

They can interpret goals, compare options, decompose tasks, and adapt when conditions change.

Across leading models, decision-making quality has largely converged.

Most failures happen after the decision is made.

The agent knows what to do.

It just can’t reliably do it on the web.

This isn’t because the agent makes a wrong choice.

It’s because the environment doesn’t let it proceed.

The Web Was Never Built for Machines

The web assumes a human user.

Someone looking at a screen.

Clicking elements.

Typing into fields.

Resolving ambiguity with context and intuition.

When an AI agent enters this environment, friction appears immediately.

Bot detection.

CAPTCHAs and secondary verification.

Constantly shifting DOM structures.

JavaScript-heavy single-page apps (SPAs) with hidden state.

Fragile session handling and browser-fingerprint checks.

From a developer’s perspective, these are “edge cases.”

From the agent’s perspective, they are hard stops.

Why Patching Automation Never Converges

Most teams treat execution failures as implementation details.

They patch selectors.

Add retries.

Ship hotfixes.

But this approach never stabilizes.

The web is dynamic, defensive, and actively hostile to automation.

No amount of patching changes that.

This isn’t a code quality issue.

It’s a structural mismatch.

Execution Is Not a Feature — It’s Infrastructure

At some point, the framing has to change.

Execution isn’t something you bolt onto an agent.

It isn’t a clever script or a better retry strategy.

Execution is infrastructure.

Reliable execution requires:

- A real browser environment

- Stable rendering and interaction behavior

- Persistent sessions and state

- Clear definitions of success and failure

- Verifiable outcomes

Trying to solve this purely at the application layer leads to fragile systems and endless maintenance.

Separating Decision-Making From Execution

Sela Network starts from a simple assumption:

AI agents already know what to do.

What they lack is a reliable way to do it on the real web.

Instead of pushing more complexity into the agent, Sela separates concerns:

- The agent handles reasoning and decisions

- Sela handles execution in real browser environments

This separation simplifies both sides.

Agents don’t need to understand the messiness of the web.

The execution layer focuses on one thing only:

making sure the task actually completes.
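To make the split concrete, here is a minimal Python sketch of what "the agent decides, the execution layer acts" can look like in code. It is not Sela's API: `Task`, `ExecutionResult`, `Executor`, `agent_decide`, and `FakeExecutor` are hypothetical names, and the executor is a toy stand-in for a real browser layer.

```python
from dataclasses import dataclass, field
from typing import List, Protocol


@dataclass
class Task:
    """What the agent decided to do, stated declaratively (hypothetical schema)."""
    goal: str
    url: str
    steps: List[str] = field(default_factory=list)


@dataclass
class ExecutionResult:
    completed: bool
    detail: str


class Executor(Protocol):
    """Anything that can carry out a Task in a real browser (hypothetical interface)."""

    def run(self, task: Task) -> ExecutionResult: ...


def agent_decide(goal: str) -> Task:
    # Stand-in for the model's reasoning: it produces a plan and never touches a browser.
    return Task(goal=goal, url="https://shop.example/checkout",
                steps=["add item to cart", "submit order"])


class FakeExecutor:
    """Toy executor standing in for a real browser execution layer."""

    def run(self, task: Task) -> ExecutionResult:
        return ExecutionResult(completed=True,
                               detail=f"ran {len(task.steps)} steps on {task.url}")


def handle(goal: str, executor: Executor) -> ExecutionResult:
    task = agent_decide(goal)   # decision-making stays with the agent
    return executor.run(task)   # execution is delegated to a separate layer
```

Swapping `FakeExecutor` for a browser-backed executor would not require touching `agent_decide`. That independence is the practical payoff of separating the two concerns.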

Why a Distributed Browser Network Matters

Most automation stacks run from centralized infrastructure.

That creates predictable patterns.

Fixed regions.

Single points of failure.

But the web itself isn’t centralized.

It’s global, heterogeneous, and inconsistent by nature.

Sela operates a distributed network of real browser nodes, spread across regions and environments.

Not to “evade” the web — but to match it.

When execution conditions resemble those of real users, flows tend to run far more stably.
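One practical consequence of spreading execution across many nodes is that no single machine or region becomes a point of failure. The Python sketch below is a generic illustration, not Sela's scheduler: `Node`, `pick_nodes`, and `run_with_failover` are hypothetical names, each node is reduced to an ID, a region, and a health flag, and a failed attempt is assumed to surface as a `RuntimeError`.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")


@dataclass
class Node:
    node_id: str
    region: str
    healthy: bool = True


def pick_nodes(nodes: List[Node], preferred_region: str) -> List[Node]:
    """Healthy nodes only, with nodes in the preferred region first."""
    healthy = [n for n in nodes if n.healthy]
    # sorted() is stable: False (in region) sorts before True, and the
    # original order is kept within each group.
    return sorted(healthy, key=lambda n: n.region != preferred_region)


def run_with_failover(nodes: List[Node], preferred_region: str,
                      attempt: Callable[[Node], T]) -> Tuple[str, T]:
    """Try candidate nodes in order and return (node_id, result) from the first success."""
    errors: List[str] = []
    for node in pick_nodes(nodes, preferred_region):
        try:
            return node.node_id, attempt(node)
        except RuntimeError as exc:
            # One node failing is recorded and skipped, not fatal.
            errors.append(f"{node.node_id}: {exc}")
    raise RuntimeError("all nodes failed: " + "; ".join(errors))
```

With nodes `b` and `d` in `"eu"`, node `a` in `"us"`, and an unhealthy node `c` skipped entirely, a failure on `b` simply moves the task to `d`. A centralized setup has no equivalent fallback when its one region misbehaves.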

Completion, Not Access, as the Metric

Traditional automation tools often define success as:

- The page loaded

- The request succeeded

Real work isn’t measured that way.

What matters is:

- Did the flow finish?

- Was the intended state actually changed?

- Is there a verifiable result?

Sela is built around task completion, not surface-level access.
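The gap between the two metrics can be made explicit with a postcondition. In this minimal Python sketch, `PageState`, `access_succeeded`, `task_completed`, and `order_placed` are hypothetical names, and a browser observation is simplified to a status code, a URL, and an optional order ID. It shows how a flow can pass the access check and still fail the completion check.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PageState:
    """Simplified snapshot of what the browser observed after a flow (hypothetical)."""
    http_status: int
    url: str
    order_id: Optional[str] = None


def access_succeeded(state: PageState) -> bool:
    # Traditional metric: the request went through and the page loaded.
    return state.http_status == 200


def task_completed(state: PageState, postcondition: Callable[[PageState], bool]) -> bool:
    # Completion metric: access succeeded AND the intended state change is observable.
    return access_succeeded(state) and postcondition(state)


def order_placed(state: PageState) -> bool:
    """Example postcondition: we reached a confirmation page that shows an order ID."""
    return state.url.endswith("/confirmation") and state.order_id is not None


if __name__ == "__main__":
    stuck = PageState(200, "https://shop.example/checkout")
    done = PageState(200, "https://shop.example/confirmation", order_id="A-1001")
    print(access_succeeded(stuck), task_completed(stuck, order_placed))  # True False
    print(task_completed(done, order_placed))                            # True
```

A flow stuck on the checkout page returns HTTP 200 and looks fine by the traditional metric. Only the postcondition reveals that no order was placed.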

Execution Should Be Provable

As AI agents move into real operational roles, trust becomes an issue.

“It says it’s done” is no longer enough.

Sela records execution paths — requests, transitions, and interactions — creating a verifiable record of what actually happened.

Not for show.

For accountability.
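One common way to make an execution record tamper-evident is a hash chain, where each entry includes the hash of the one before it. The Python sketch below assumes that technique; it is not a description of how Sela stores its records, and `ExecutionLog` and its entry fields are hypothetical. Note that a hash chain only proves integrity if the latest hash is also kept somewhere the log's writer cannot rewrite.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class ExecutionLog:
    """Append-only, hash-chained record of execution steps (illustrative format only)."""
    entries: List[Dict[str, str]] = field(default_factory=list)

    def append(self, kind: str, detail: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"kind": kind, "detail": detail, "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash and check that each entry links to the one before it."""
        prev = GENESIS
        for entry in self.entries:
            body = {"kind": entry["kind"], "detail": entry["detail"], "prev": entry["prev"]}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True
```

Recording a request, an interaction, and a transition produces a log that verifies. Quietly editing any earlier entry afterwards breaks verification, because its recomputed hash no longer matches the stored one.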

Why Nodes Matter

All of this only works because there is real execution capacity behind it.

Sela’s browser network is powered by nodes — real environments that execute tasks on behalf of AI agents.

Running a Sela node isn’t just about supporting a network.

It’s about participating in the execution layer AI agents increasingly depend on.

If AI is going to operate on the human web,

that execution layer has to exist somewhere.

This is where it’s being built.

Execution Is Becoming the Differentiator

Models are converging.

Reasoning is improving everywhere.

The systems that matter next won’t be judged by demos,

but by whether they can run continuously, reliably, and at scale.

Execution infrastructure is no longer optional.

Sela Network is building that layer — one node at a time.

The web was built for humans.

If AI agents are going to work there too,

execution has to change.

If you want to be part of that shift,

run a Sela node and help power the execution layer the next generation of agents will rely on.